I’ve been exploring a question that started as a personal frustration.

I wanted a way to turn voice memos into high-level thought leadership content. Not just raw transcripts, but actual formatted LinkedIn posts, deep-dive blog articles, punchy threads. As a career technical writer pivoting into AI consulting, I knew I needed to build visibility. But I didn’t have a traditional dev background to build a custom app.

What I needed was a system that could think like an editor but run like a machine.

Bottleneck

The problem wasn’t capturing ideas. I could record a voice memo anytime. The problem was what happened next.

Raw transcripts are rarely usable as-is. They need structure and formatting. They need to be adapted for different platforms. What works on LinkedIn doesn’t necessarily work as a Twitter thread. And doing that manually across multiple formats is time-intensive enough that it often just doesn’t happen.

In my experience, this is where good ideas stall. Not in the thinking, but in the production layer between thought and publication.

The opportunity I saw was this: if you could automate the editorial work (the structuring, the format adaptation, the distribution), you could remove the friction that prevents consistent output.

What I built

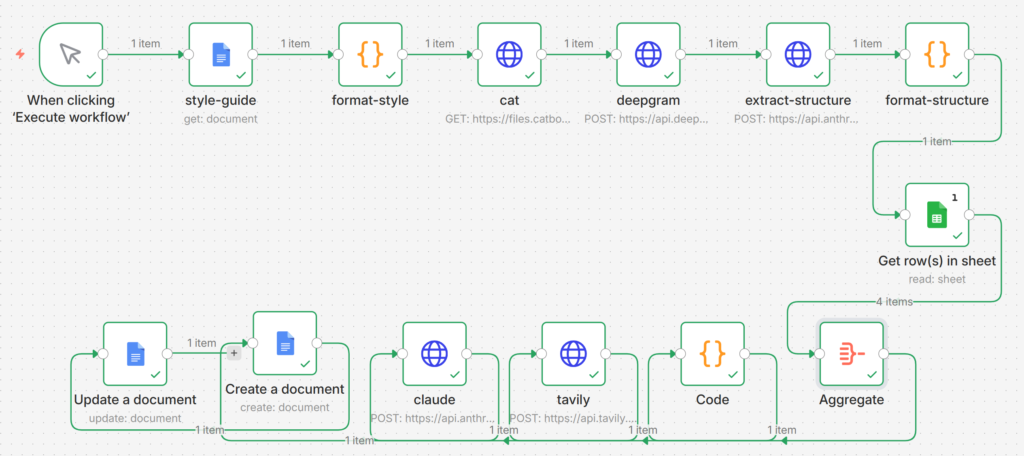

The framework I landed on is what I call the Velocity Engine. I built it using n8n, a visual workflow builder, as the central nervous system. I hooked in Deepgram for transcription, Tavily for real-time web research, and Claude for the core writing.

The flow is surprisingly elegant:

- Raw audio comes in

- It’s transcribed

- The system extracts a logical structure

- It pulls from my personal wisdom library

- It runs a research scan

- Claude writes four distinct outputs

- Everything lands formatted in a single Google document

What defines this approach is that each tool does what it does best. Deepgram handles audio. Claude handles editorial judgment. n8n orchestrates the handoffs.
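To make the handoffs concrete, here is a minimal Python sketch of the same flow as a chain of stages. It is not the n8n workflow itself. Every name in it (`Draft`, `run_pipeline`, the stage functions, the file name `memo.m4a`) is hypothetical, and each stage returns placeholder text instead of calling Deepgram, Tavily, or Claude.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Draft:
    """State handed from one stage to the next (a hypothetical shape)."""
    audio_path: str
    transcript: str = ""
    outline: list = field(default_factory=list)
    quotes: list = field(default_factory=list)
    notes: list = field(default_factory=list)
    outputs: dict = field(default_factory=dict)
    document: str = ""


Stage = Callable[[Draft], Draft]


def transcribe(d: Draft) -> Draft:
    # Stand-in for the transcription service; no real API is called.
    d.transcript = f"(transcript of {d.audio_path})"
    return d


def extract_structure(d: Draft) -> Draft:
    d.outline = ["core argument", "supporting point"]
    return d


def add_quotes(d: Draft) -> Draft:
    d.quotes = ["(quote from the wisdom library)"]
    return d


def run_research(d: Draft) -> Draft:
    d.notes = ["(research note)"]
    return d


def make_writer(formats: list) -> Stage:
    """Build a writing stage that produces one draft per requested format."""
    def write(d: Draft) -> Draft:
        for name in formats:
            d.outputs[name] = f"{name} draft based on: {d.outline[0]}"
        return d
    return write


def format_document(d: Draft) -> Draft:
    # Join every output into one document, one section per format.
    d.document = "\n\n".join(f"{k}\n{v}" for k, v in d.outputs.items())
    return d


def run_pipeline(d: Draft, stages: list) -> Draft:
    """Pass the draft through each stage in order."""
    for stage in stages:
        d = stage(d)
    return d


stages = [
    transcribe,
    extract_structure,
    add_quotes,
    run_research,
    make_writer(["linkedin", "blog", "thread"]),
    format_document,
]
result = run_pipeline(Draft("memo.m4a"), stages)
```

Each stage only reads and writes the shared `Draft`, so swapping one tool for another means replacing one function and leaving the rest alone. The format list is passed in rather than hard-coded; the three names used here are only the formats mentioned earlier in this post.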

I’m not writing an application. I’m designing a workflow visually, with a small Code node only where something needs cleaning up.

What broke

Building this wasn’t smooth. A few things failed hard.

The 4x execution bug. My workflow pulled wisdom quotes from a Google Sheet with 4 rows. n8n treats each row as a separate item, so every downstream node ran 4 times, once per row. I ended up with 4 Google Docs instead of 1. The fix: an Aggregate node that combines the rows into a single item before passing them forward.
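The shape of that bug is easier to see outside the tool. This is a small Python sketch of the idea, not n8n's actual implementation; `run_per_item`, `aggregate`, and `create_doc` are hypothetical names, and the rows are placeholder data.

```python
def run_per_item(items, step):
    """Run a downstream step once for every incoming item."""
    return [step(item) for item in items]


def aggregate(items, key):
    """Collapse many items into a single item that holds a list."""
    return [{key: [item[key] for item in items]}]


def create_doc(item):
    # Stand-in for the step that creates a Google Doc.
    return "doc"


rows = [{"quote": f"quote {i}"} for i in range(1, 5)]  # 4 sheet rows

docs_before = run_per_item(rows, create_doc)
docs_after = run_per_item(aggregate(rows, "quote"), create_doc)

print(len(docs_before))  # 4
print(len(docs_after))   # 1
```

After aggregation there is still a single item flowing forward, and it carries all four quotes in one list, so nothing is lost by collapsing the rows.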

JSON errors. Special characters in the style guide — quotes, line breaks, tabs — broke the Claude API call. “Bad control character in string literal at position 6688.” I added Code nodes to strip problem characters before sending anything to Claude.
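Here is a hedged Python sketch of why that error appears and two ways around it. The `{"style_guide": ...}` body is a made-up shape for illustration, not the real Claude request format, and the function names are hypothetical. The first fix mirrors the stripping approach described above. The second is an alternative: let a JSON serializer do the escaping so nothing has to be stripped.

```python
import json
import re

# C0 control characters (includes newline and tab) plus DEL.
_CONTROL_CHARS = re.compile(r"[\x00-\x1f\x7f]")


def sanitize_for_template(text: str) -> str:
    """Make text safe to paste between double quotes in a JSON template."""
    text = _CONTROL_CHARS.sub(" ", text)   # line breaks, tabs -> spaces
    text = re.sub(r" {2,}", " ", text)     # collapse runs of spaces
    text = text.replace("\\", "")          # drop backslashes
    return text.replace('"', "'").strip()  # double quotes -> single quotes


def body_by_interpolation(style_guide: str) -> str:
    # Fragile: raw text is pasted straight into a JSON string.
    return '{"style_guide": "' + style_guide + '"}'


def body_by_serializing(style_guide: str) -> str:
    # Robust: json.dumps escapes quotes and control characters itself.
    return json.dumps({"style_guide": style_guide})


style_guide = 'Use "plain" words.\n\tAvoid jargon.'

try:
    json.loads(body_by_interpolation(style_guide))
except json.JSONDecodeError as err:
    print("invalid JSON:", err.msg)

cleaned = json.loads(body_by_interpolation(sanitize_for_template(style_guide)))
preserved = json.loads(body_by_serializing(style_guide))
```

Stripping changes the text (line breaks in the style guide are gone), while serializing keeps it intact. Which trade-off is better depends on whether the formatting of the style guide matters to the prompt.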

Slop output. Early drafts were generic. Unearned claims like “most companies are doing it wrong.” No clear thesis. The content wandered. I added a thesis rule to the prompt: ONE core argument, 3-5 supporting points, no claims the transcript doesn’t support.
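One way to keep a rule like this from drifting is to store it as data and assemble the prompt from it. The sketch below assumes that approach; the wording of the instruction lines, the `build_prompt` name, and the sample transcript are illustrative, not the actual prompt.

```python
THESIS_RULES = (
    "ONE core argument.",
    "3-5 supporting points.",
    "No claims the transcript doesn't support.",
)


def build_prompt(transcript: str, output_format: str) -> str:
    """Assemble a writing prompt with the thesis rules listed up front."""
    rules = "\n".join(f"- {rule}" for rule in THESIS_RULES)
    return (
        f"Write a {output_format} from the transcript below.\n\n"
        f"Rules:\n{rules}\n\n"
        f"Transcript:\n{transcript}"
    )


prompt = build_prompt("Voice memo text goes here.", "LinkedIn post")
```

Because every output format goes through the same function, a change to the rules reaches all of the drafts at once.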

Harsh tone. Even with better structure, the writing felt preachy. “The truth is…” and “You need to…” I added softening rules: replace absolutes with “I’m noticing…” and “In my experience…”
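The softening rules live in the prompt, but a quick check on the output can catch drafts where they didn't hold. This is a complementary sketch, not part of the workflow described here; `PREACHY_PHRASES` and `flag_preachy_phrases` are hypothetical names, and the phrase list contains only the two examples above.

```python
import re

PREACHY_PHRASES = ("The truth is", "You need to")


def flag_preachy_phrases(draft: str) -> list:
    """Return the listed phrases that appear in the draft, ignoring case."""
    return [
        phrase
        for phrase in PREACHY_PHRASES
        if re.search(re.escape(phrase), draft, flags=re.IGNORECASE)
    ]


flagged = flag_preachy_phrases("The truth is, you need to post daily.")
clean = flag_preachy_phrases("In my experience, posting daily helps.")

print(flagged)  # ['The truth is', 'You need to']
print(clean)    # []
```

A flagged draft still needs a human decision. The check only points at the sentences worth rereading.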

Each fix took 30-60 minutes of debugging. But each one taught me something about how these systems actually work.

What matters

What’s interesting to me is the accessibility barrier that’s quietly dissolving.

Building custom content solutions used to require dev skills you might not have. But no-code workflow builders lower that barrier significantly. You can orchestrate powerful AI with little or no code.

You’re stacking existing tools visually rather than building from scratch.

This suggests something larger: that the gap between “I have an idea” and “I have a functioning system” is narrowing for non-technical practitioners. The constraint isn’t access to capability anymore. It’s knowing how to think architecturally about workflows.

Editorial architecture

What I’ve learned is that a well-designed pipeline forces clarity.

The structure I settled on — transcribe → structure → enrich → write → format — mirrors editorial thinking. It’s not just automation. It’s a system that asks the same questions a good editor would ask:

- What’s the core argument?

- What evidence supports it?

- How should this be structured for this audience?

- What format does this idea deserve?

In my experience, ad-hoc content creation lacks this consistency. A pipeline makes the thinking repeatable.

Friction remains

With any system like this, I’d argue the honest question is always: what breaks?

Systems like this don’t eliminate editorial judgment. They accelerate it. You still need to feed the system good prompts. You still need to review outputs. You still need to know what good looks like.

What you’re removing is the manual reformatting work. The copy-pasting across platforms. The starting-from-scratch every time.

You’re not replacing the editor. You’re giving them leverage.

What’s next

Here’s what I’m noticing: when the friction between idea and output drops low enough, consistency becomes possible.

Not perfection. Not viral hits every time. But the ability to maintain a steady drumbeat of clear, structured thinking across multiple channels.

For someone building visibility in a new field, that consistency compounds. The Velocity Engine isn’t magic. It’s leverage applied to the part of the process that used to slow everything down.

The question I’m sitting with now is: if a non-technical practitioner can build this, what does that mean for how we think about content production more broadly?

Maybe the future isn’t custom apps built by dev teams. Maybe it’s practitioners designing editorial systems visually, using tools that already exist.